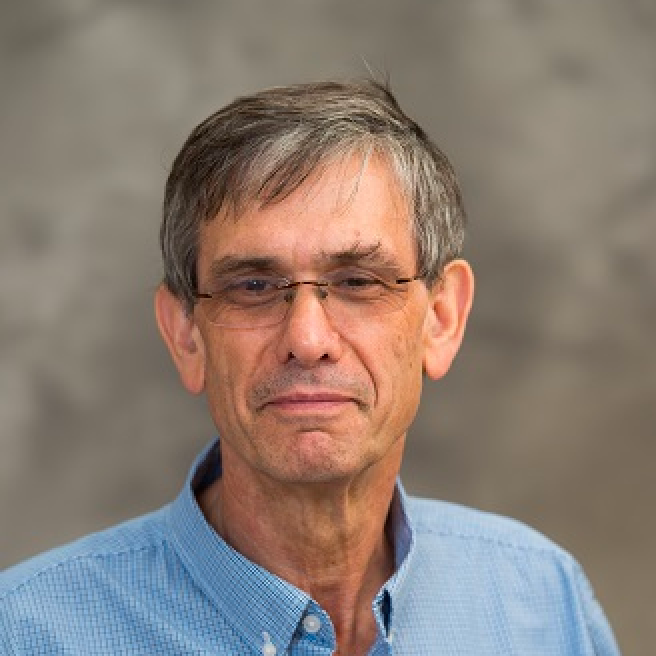

Moulinath Banerjee

Professor, Biostatistics, Statistics, LSA

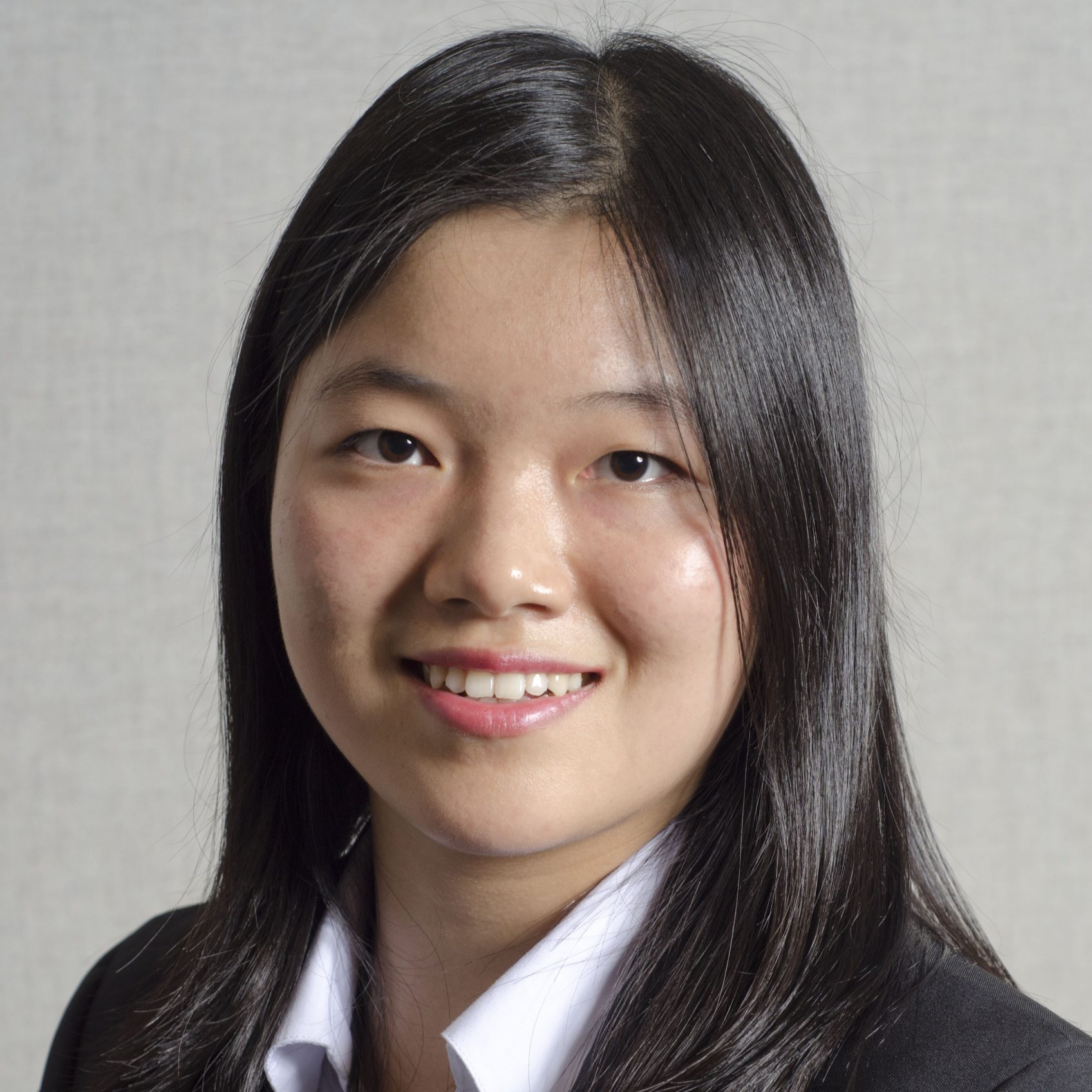

Yang Chen

Assistant Professor, Statistics, LSA

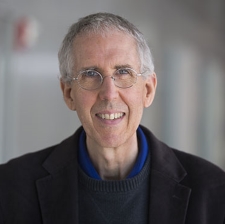

Fred Feinberg

Professor, Marketing, Ross School of Business; Statistics, LSA

Rich Gonzalez

Professor, Psychology, LSA; Statistics, LSA; Marketing, Ross School of Business

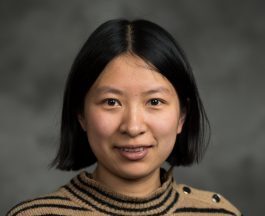

Xuming He

Professor, Statistics, LSA